Converting a photograph into a 3D model traditionally requires photogrammetry software, dozens of overlapping images, and significant processing time. Photogrammetry -- the science of extracting 3D measurements from photographs -- has long demanded specialized expertise and hardware. Pocket 3D AI changes that equation by generating a textured 3D model from a single image in seconds, directly in your browser, with no software installation.

Try Pocket 3D AI free


How to use it:

- Upload any image or select from the example gallery.

- Click Generate to start the two-stage reconstruction.

- Preview the 3D model interactively in the viewer -- rotate, zoom, and inspect.

- Download the result as a GLB file (textured 3D model) or STL file (for 3D printing).

Key Takeaways

- Pocket 3D AI generates a textured 3D model from a single photograph in seconds, entirely through the browser -- no account, GPU, or software installation required.

- The tool uses a two-stage neural pipeline (sparse structure generation followed by structured latent generation) based on the TRELLIS framework to infer geometry and texture from one viewpoint.

- Output files are downloadable as GLB (for 3D viewers, game engines, and web apps) or STL (ready for 3D printing), supporting product visualization, game asset prototyping, and creative projects.

- Because the AI must infer geometry that a single image does not show, the output is suited to visualization and prototyping rather than precision measurement.

- For applications requiring dimensional accuracy -- accident reconstruction, construction documentation, surveying -- SkyeBrowse's full videogrammetry platform delivers accuracy of up to 0.1 inches from continuous video, depending on the tier.

Contents

- What is Pocket 3D AI?

- How does AI image-to-3D conversion work?

- What can you do with the 3D models?

- When do you need full-scale 3D reconstruction?

What is Pocket 3D AI?

Pocket 3D AI is a free browser-based tool built by SkyeBrowse that converts a single photograph into a textured 3D model. Upload any image -- a building, a vehicle, a piece of furniture, a product -- and the AI generates a full 3D reconstruction with downloadable GLB and STL files. The tool uses a two-stage neural reconstruction pipeline to infer geometry and texture from a single viewpoint.

Unlike traditional photogrammetry workflows that require capturing dozens or hundreds of overlapping photos from multiple angles, Pocket 3D AI works from one image. The tradeoff is that AI-inferred geometry fills in what the camera cannot see, so the output is best suited for visualization, conceptual modeling, 3D printing prototypes, and creative projects rather than precision measurement.

You use the tool entirely through the browser. There is no account to create, nothing to download, and no GPU required on your end, because processing happens in the cloud.

How does AI image-to-3D conversion work?

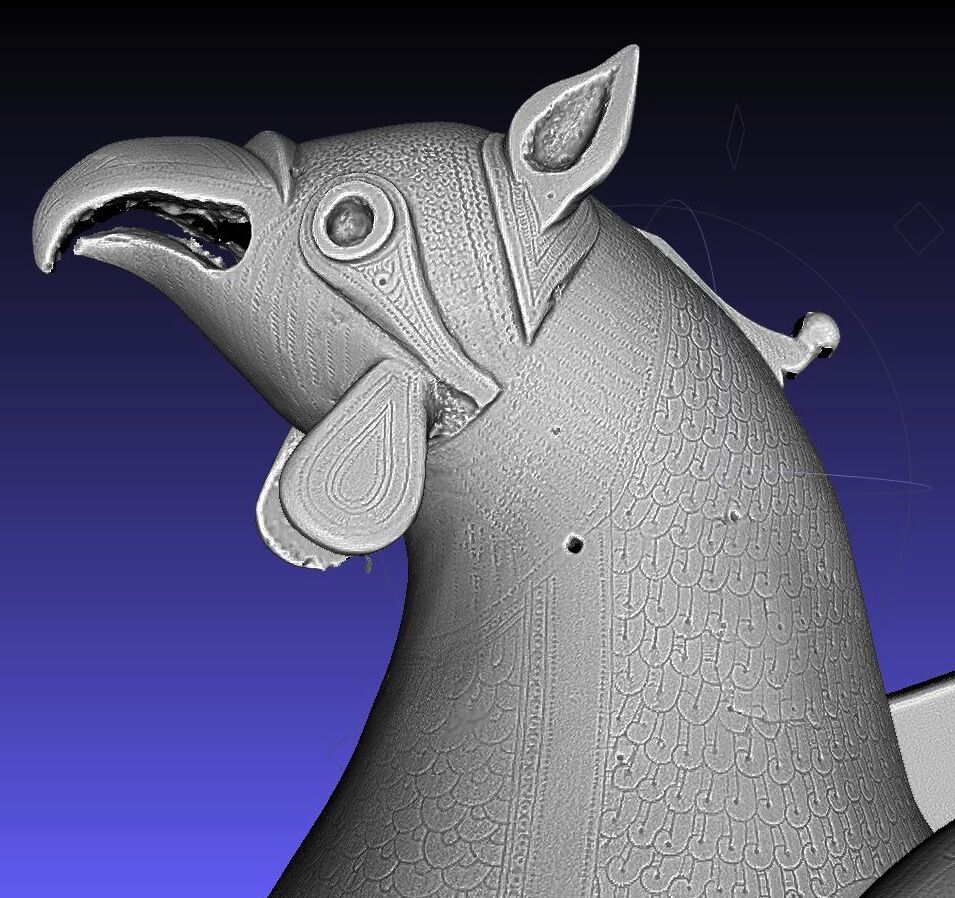

Pocket 3D AI uses a two-stage neural pipeline based on the TRELLIS image-to-3D framework. The first stage (Sparse Structure Generation) predicts the rough 3D shape and spatial layout from the input image. The second stage (Structured Latent Generation) refines geometry and applies photorealistic textures. The result is a mesh with UV-mapped textures that can be exported as GLB, which keeps the textures, or as STL, which stores geometry only.

The model has been trained on large datasets of 3D objects paired with their 2D photographs. When you upload an image, the AI recognizes the object category and structural cues -- shadows, perspective lines, surface curvature -- to predict what the object looks like from angles the photo does not show.

You can adjust generation parameters including guidance strength (how closely the output follows the input image), sampling steps (more steps produce finer detail but take longer to generate), and mesh simplification (reducing polygon count for lighter files). For most images, the default settings work well without adjustment.
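To make the three parameters concrete, here is a minimal Python sketch of how they might be grouped and validated. Pocket 3D AI exposes these settings through its web interface, so the class name `GenerationSettings`, the field names, and the default values below are all illustrative assumptions, not the tool's actual API or defaults.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GenerationSettings:
    """Hypothetical container for the three adjustable parameters."""

    # Placeholder defaults chosen for illustration only.
    guidance_strength: float = 7.5     # higher follows the input image more closely
    sampling_steps: int = 12           # more steps: finer detail, longer wait
    mesh_simplification: float = 0.95  # fraction of triangles to remove

    def __post_init__(self) -> None:
        if self.guidance_strength < 0:
            raise ValueError("guidance_strength must be non-negative")
        if self.sampling_steps < 1:
            raise ValueError("sampling_steps must be at least 1")
        if not 0.0 <= self.mesh_simplification < 1.0:
            raise ValueError("mesh_simplification must be in [0, 1)")

    def estimated_triangles(self, source_triangles: int) -> int:
        """Triangle count left after removing the simplified fraction."""
        return round(source_triangles * (1.0 - self.mesh_simplification))
```

For example, `GenerationSettings(mesh_simplification=0.5).estimated_triangles(1000)` gives 500, which shows how a higher simplification value trades surface detail for a lighter file.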

What can you do with the 3D models?

GLB files open in most 3D viewers, game engines, and web-based 3D applications -- including Blender, Unity, Unreal Engine, and Three.js. STL files can be loaded into slicing software and printed on consumer or professional 3D printers. Common use cases include product visualization, game asset prototyping, architectural concept models, educational demonstrations, and creative art projects.
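Before importing a downloaded GLB into one of these tools, it can help to confirm the file is intact and see what it contains. The sketch below reads the GLB container header and its JSON chunk using only the Python standard library, following the layout defined by the glTF 2.0 specification. The function name `read_glb_summary` and the file name in the usage note are hypothetical.

```python
import json
import struct

GLB_MAGIC = b"glTF"
JSON_CHUNK = 0x4E4F534A  # ASCII "JSON" read as a little-endian uint32


def read_glb_summary(data: bytes) -> dict:
    """Summarize a GLB byte string: container version, declared length, asset counts."""
    if len(data) < 20:
        raise ValueError("too short to be a GLB file")
    # 12-byte header: magic, container version, total file length.
    magic, version, length = struct.unpack_from("<4sII", data, 0)
    if magic != GLB_MAGIC:
        raise ValueError("not a GLB file (bad magic)")
    # The first chunk must be the JSON scene description.
    chunk_length, chunk_type = struct.unpack_from("<II", data, 12)
    if chunk_type != JSON_CHUNK:
        raise ValueError("first chunk is not JSON")
    doc = json.loads(data[20:20 + chunk_length].decode("utf-8"))
    return {
        "version": version,
        "declared_length": length,
        "meshes": len(doc.get("meshes", [])),
        "materials": len(doc.get("materials", [])),
        "images": len(doc.get("images", [])),
    }
```

A typical use would be `read_glb_summary(open("model.glb", "rb").read())`. A textured model should normally report at least one mesh and one image.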

Practical applications include:

- Product visualization: Convert a product photo into a 3D model for e-commerce previews or AR try-on experiences.

- 3D printing: Generate an STL from a reference photo and print a physical prototype in hours.

- Game development: Create quick concept assets from reference images before investing in manual modeling.

- Education: Students and instructors can demonstrate 3D reconstruction concepts without expensive software licenses.

- Creative projects: Artists use AI-generated 3D models as starting points for sculpting, texturing, and scene composition.
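For the 3D printing use case, keep in mind that the STL format carries no units, so a generated model usually needs to be scaled to a real-world size before slicing. The sketch below reads a binary STL with the Python standard library, computes its bounding box, and works out the uniform scale factor for a target size. It assumes the download is a binary (not ASCII) STL, which the article does not specify, and the function names are hypothetical.

```python
import struct


def stl_bounding_box(data: bytes):
    """Return (triangle_count, min_xyz, max_xyz) for a binary STL byte string."""
    if len(data) < 84:
        raise ValueError("too short to be a binary STL")
    # Binary STL: 80-byte header, then a uint32 triangle count.
    (count,) = struct.unpack_from("<I", data, 80)
    if len(data) < 84 + 50 * count:
        raise ValueError("file is truncated or not a binary STL")
    lo = [float("inf")] * 3
    hi = [float("-inf")] * 3
    for i in range(count):
        # Each 50-byte record: normal (3 floats), 3 vertices (9 floats), uint16 attribute.
        values = struct.unpack_from("<12f", data, 84 + 50 * i)
        for vertex in range(3):
            for axis in range(3):
                c = values[3 + 3 * vertex + axis]  # skip the normal
                lo[axis] = min(lo[axis], c)
                hi[axis] = max(hi[axis], c)
    return count, tuple(lo), tuple(hi)


def scale_to_fit(min_xyz, max_xyz, target_longest_side: float) -> float:
    """Uniform scale factor that makes the longest side equal the target length."""
    longest = max(h - l for l, h in zip(min_xyz, max_xyz))
    if longest <= 0:
        raise ValueError("model has no extent")
    return target_longest_side / longest
```

If the bounding box spans 2 units on its longest side and the print should be 100 mm long, `scale_to_fit` returns 50.0, which is the factor to enter in the slicer.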

When do you need full-scale 3D reconstruction?

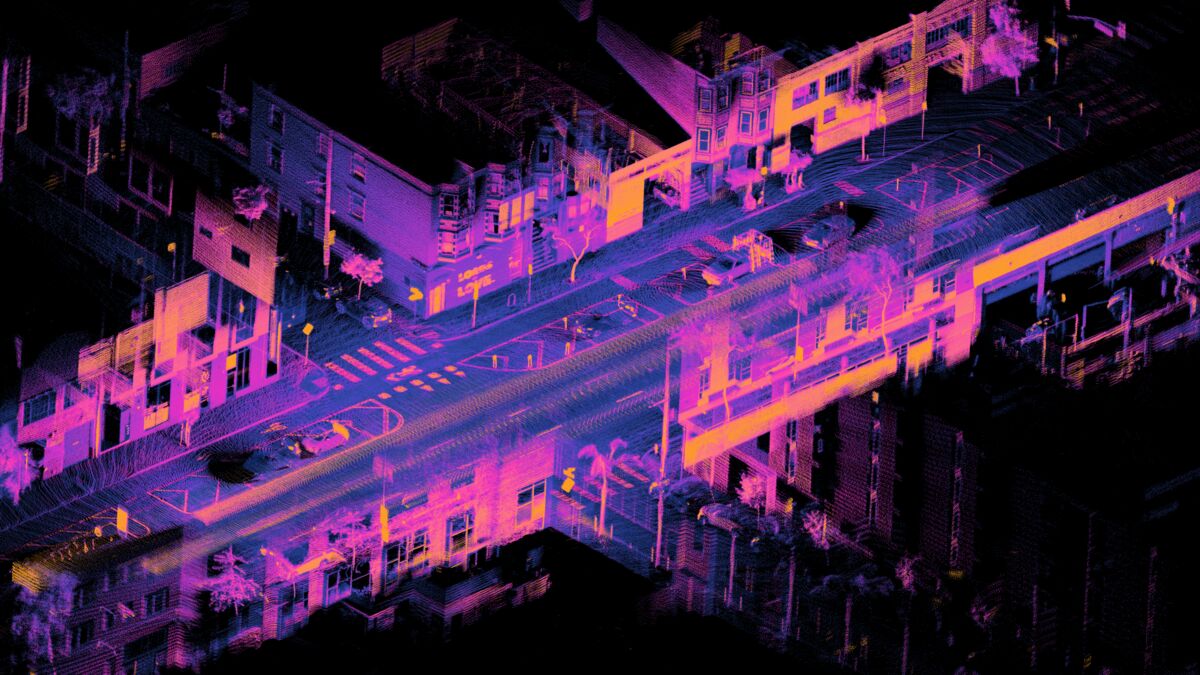

Pocket 3D AI generates visually convincing models from a single image, but professional applications that depend on dimensional accuracy -- accident reconstruction, construction documentation, surveying, or forensic evidence -- need multi-angle capture and calibrated processing. SkyeBrowse's core videogrammetry platform builds measurement-grade 3D models from continuous video with accuracy ranging from 2-6 inches (Lite) to 0.1 inches (Premium Advanced).

The difference comes down to input data. A single photograph contains limited depth information, so AI must infer geometry. Videogrammetry -- the process of extracting 3D measurements from continuous video -- captures real geometry from thousands of frames, producing models that support court-admissible measurements, volume calculations, and GIS-referenced exports.
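The depth limitation of a single photograph can be illustrated with a textbook pinhole camera model. This is a generic sketch, not SkyeBrowse's reconstruction algorithm, and the function names, focal length, and baseline are illustrative assumptions. Two points on the same viewing ray land on the same pixel in one image, while a second viewpoint separates them and lets depth be computed from the shift (disparity).

```python
def project(point, focal_length=1.0, camera_x=0.0):
    """Pinhole projection for a camera at (camera_x, 0, 0) looking down +z."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    return (focal_length * (x - camera_x) / z, focal_length * y / z)


def depth_from_disparity(focal_length, baseline, disparity):
    """Classic two-view relation: depth = focal_length * baseline / disparity."""
    if disparity == 0:
        raise ValueError("zero disparity: depth cannot be recovered")
    return focal_length * baseline / disparity


near = (1.0, 0.5, 2.0)
far = (2.0, 1.0, 4.0)  # same viewing ray as `near`, twice as far away

# One view: both points land on pixel (0.5, 0.25), so depth is ambiguous.
single_view_ambiguous = project(near) == project(far)

# Second view shifted 0.5 units sideways: the points now separate.
baseline = 0.5
near_disparity = project(near)[0] - project(near, camera_x=baseline)[0]
far_disparity = project(far)[0] - project(far, camera_x=baseline)[0]
near_depth = depth_from_disparity(1.0, baseline, near_disparity)  # 2.0
far_depth = depth_from_disparity(1.0, baseline, far_disparity)    # 4.0
```

With only one image there is no baseline and therefore no disparity to measure, which is why a single-image tool has to infer depth from learned priors, while video supplies many viewpoints to measure it from.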

If your workflow requires accuracy for drone mapping, accident reconstruction, construction monitoring, or surveying, SkyeBrowse's full platform delivers professional-grade results via Universal Upload on app.skyebrowse.com.