Collision reconstruction is the scientific process of analyzing physical, electronic, and environmental evidence to determine how a traffic crash occurred — including vehicle speeds, positions, and driver actions before and after impact. Traditionally performed by trained accident reconstructionists using total stations, measuring wheels, and paper diagrams, the discipline has shifted significantly as drone mapping and photogrammetry-based tools have entered the field. This article walks through the full reconstruction workflow — from scene arrival through court-ready documentation — and explains where modern 3D capture technology fits in.

Key Takeaways

- Collision reconstruction requires documented evidence from four domains: physical (skid marks, debris), vehicle (damage, EDR data), environmental (road geometry, signage), and witness/electronic records.

- Accurate spatial measurements are the foundation of every reconstruction — position errors of even a few feet can change calculated pre-impact speed by 5–10 mph.

- Drone-based videogrammetry, where aerial video is processed into a georeferenced 3D model, reduces scene documentation time from several hours to under 10 minutes.

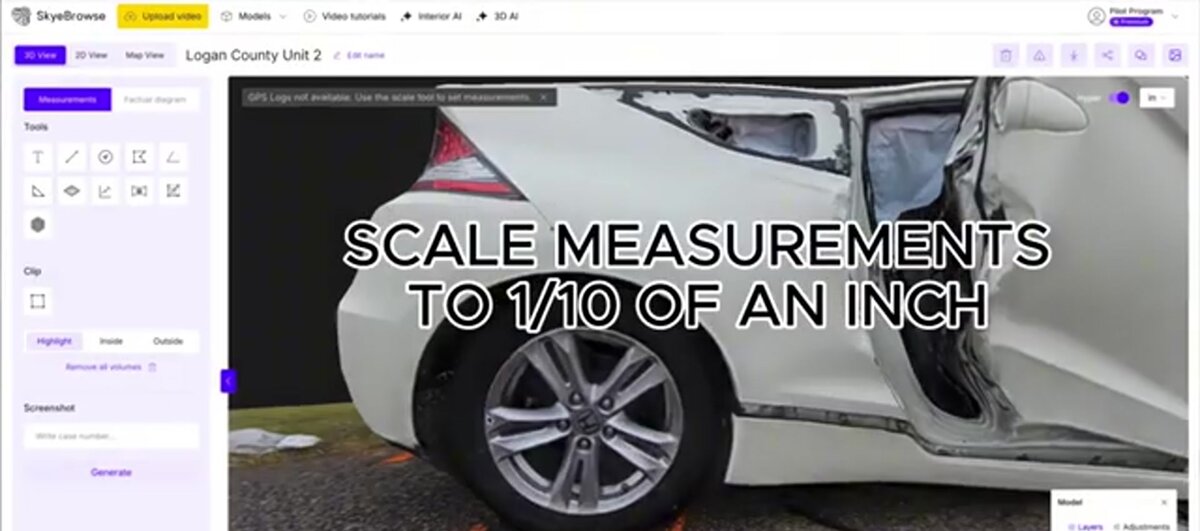

- SkyeBrowse's cloud platform produces court-admissible 3D crash scene models at up to 0.1-inch accuracy without requiring a laser scanner or dedicated photogrammetry hardware.

- Over 1,200 public safety agencies use SkyeBrowse, including agencies that have entered drone-generated 3D models into evidence at trial.

Contents

- What does a collision reconstructionist actually do at the scene?

- What evidence is collected in crash reconstruction?

- How are crash scenes measured and documented?

- How does drone mapping change the reconstruction workflow?

- How is a 3D crash scene model used in analysis and court?

- FAQ

What does a collision reconstructionist actually do at the scene?

A collision reconstructionist arrives at a crash scene — often after primary responders have cleared life safety hazards — and takes systematic control of the physical evidence environment. Their job is to record everything: positions of vehicles, skid patterns, fluid trails, debris fields, road geometry, and sight lines. They work against a clock because roads need to reopen and evidence degrades, so speed and completeness are both priorities. The final product of a reconstruction is a defensible, physics-based account of how the collision unfolded, usable in civil litigation, criminal prosecution, or agency safety review.

The role requires both field and analytical skills. On scene, reconstructionists manage evidence photography, coordinate with patrol officers, and operate measurement equipment. Back at the office, they apply collision dynamics formulas — drag factors, momentum conservation, energy methods — to the documented measurements to calculate speeds and timelines. Professional certification through organizations like ACTAR (Accreditation Commission for Traffic Accident Reconstruction) or training through IPTM (Institute of Police Technology and Management) provides the methodological foundation courts expect when expert testimony is offered.
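The drag-factor method is the simplest of these formulas. The sketch below computes the minimum speed of a vehicle that skids to a stop using the common field form S = √(30·d·f), with S in mph, d in feet, and f the drag factor. The 60 ft distance and f = 0.7 are hypothetical illustration values, and the helper names are not taken from any reconstruction package. The sketch also shows how a ±3 ft distance error propagates through this one formula. Other methods, such as momentum analysis, respond to measurement error differently.

```python
import math


def skid_to_stop_speed_mph(distance_ft: float, drag_factor: float) -> float:
    """Minimum speed (mph) of a vehicle that skids to a stop over distance_ft.

    S = sqrt(30 * d * f) is v**2 = 2 * f * g * d rewritten for feet and mph.
    """
    if distance_ft < 0 or drag_factor <= 0:
        raise ValueError("distance must be >= 0 and drag factor > 0")
    return math.sqrt(30.0 * distance_ft * drag_factor)


def speed_range_mph(distance_ft: float, drag_factor: float, error_ft: float) -> tuple[float, float]:
    """Speeds (mph) at the low and high ends of a +/- error_ft distance error."""
    low = skid_to_stop_speed_mph(max(distance_ft - error_ft, 0.0), drag_factor)
    high = skid_to_stop_speed_mph(distance_ft + error_ft, drag_factor)
    return low, high


if __name__ == "__main__":
    # Hypothetical inputs: 60 ft of skid, assumed drag factor 0.7.
    nominal = skid_to_stop_speed_mph(60.0, 0.7)
    low, high = speed_range_mph(60.0, 0.7, 3.0)
    print(f"nominal {nominal:.1f} mph, range {low:.1f} to {high:.1f} mph")
```

Grade, braking efficiency, and multi-surface adjustments are left out for brevity.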

The reconstructionist's work product — a technical report, scale diagram, and supporting exhibits — must withstand cross-examination by opposing experts. That means every measurement needs a documented source, every calculation needs a stated methodology, and every exhibit needs a clear chain of custody.

What evidence is collected in crash reconstruction?

Reconstruction evidence falls into four categories: physical (skid marks, gouge marks, debris scatter, fluid trails), vehicle (structural damage, airbag deployment, event data recorder output), environmental (road surface, grade, sight distance, signal timing), and documentary (surveillance video, 911 recordings, cell phone records, witness statements). Each category feeds a different part of the physics analysis. Missing evidence from any category forces the reconstructionist to rely on estimates, which weakens the final opinion.

Physical evidence on the roadway is especially time-sensitive. Tire marks (including the faint marks left by ABS-equipped vehicles), yaw marks, and fluid stains begin degrading as soon as traffic and weather reach the scene. NHTSA research consistently emphasizes that event data recorder (EDR) data, now present in most vehicles manufactured after 2013, provides the most reliable pre-crash speed readings. However, EDR data must be correlated with physical evidence to establish vehicle positions at the recorded timestamps.
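The sketch below illustrates that correlation step. It integrates a series of EDR pre-crash speed samples backward from the recorded time zero to estimate how far before the impact point the vehicle was at each timestamp. The sample values and 0.5 s spacing are hypothetical. The code also assumes that the recorded time zero coincides with the physical point of impact located on scene. In practice, that alignment and the accuracy of the recorded speed during hard braking are assumptions the analyst must justify.

```python
from typing import Sequence

MPH_TO_FPS = 5280.0 / 3600.0  # 1 mph is about 1.4667 ft/s


def distance_before_impact_ft(times_s: Sequence[float], speeds_mph: Sequence[float]) -> list[float]:
    """Distance (ft) from the vehicle's position at each sample to the impact point.

    times_s must be ascending and end at 0.0 (assumed to be the impact time);
    earlier samples are negative. Speed is assumed to vary linearly between
    samples, so each interval is integrated with the trapezoidal rule.
    """
    if len(times_s) != len(speeds_mph) or len(times_s) < 2:
        raise ValueError("need matching time and speed series with >= 2 samples")
    if times_s[-1] != 0.0:
        raise ValueError("last sample must be at t = 0")
    distances = [0.0] * len(times_s)
    # Walk backward from impact, accumulating distance covered in each interval.
    for i in range(len(times_s) - 2, -1, -1):
        dt = times_s[i + 1] - times_s[i]
        avg_fps = 0.5 * (speeds_mph[i] + speeds_mph[i + 1]) * MPH_TO_FPS
        distances[i] = distances[i + 1] + avg_fps * dt
    return distances


if __name__ == "__main__":
    times = [-2.0, -1.5, -1.0, -0.5, 0.0]
    speeds = [45.0, 45.0, 44.0, 38.0, 30.0]  # hypothetical EDR samples, mph
    for t, d in zip(times, distance_before_impact_ft(times, speeds)):
        print(f"t = {t:+.1f} s: {d:6.1f} ft before impact")
```

The resulting distances can then be laid out along the vehicle's path in the scene diagram and compared against tire marks or sight-line evidence.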

Vehicle damage documentation includes both photographic and measurement-based recording of crush depth and distribution, which feeds energy-based speed calculations. Environmental evidence — road grade, surface friction coefficient, lane widths — must be captured while investigators still have scene access, because these conditions change with road resurfacing or sign replacement. For a broader look at the tools available for documenting all of this evidence systematically, see our guide to crime scene documentation equipment.
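Crush measurements feed energy-based calculations through a stiffness model. The sketch below uses the linear model widely taught for CRASH3-style analyses. In this model, absorbed energy per unit damage width is A·C + B·C²/2 + A²/(2B) for crush depth C. The sketch then converts the total energy to an equivalent energy speed (EES). The stiffness coefficients A and B, the crush profile, and the vehicle mass are hypothetical; real coefficients come from vehicle-specific crash test data. Integrating the measured profile with the trapezoidal rule is a simplification chosen for brevity.

```python
import math
from typing import Sequence


def crush_energy_joules(crush_m: Sequence[float], width_m: float,
                        a_n_per_m: float, b_n_per_m2: float) -> float:
    """Approximate absorbed energy (J) from equally spaced crush depths (m).

    Energy per unit width = A*C + B*C**2/2 + G, with G = A**2 / (2*B).
    The per-width energy is integrated across the damage width (trapezoidal rule).
    """
    if len(crush_m) < 2 or width_m <= 0 or b_n_per_m2 <= 0:
        raise ValueError("need >= 2 crush points, positive width and B")
    g = a_n_per_m ** 2 / (2.0 * b_n_per_m2)
    density = [a_n_per_m * c + 0.5 * b_n_per_m2 * c * c + g for c in crush_m]
    spacing = width_m / (len(crush_m) - 1)
    return sum(0.5 * (density[i] + density[i + 1]) * spacing
               for i in range(len(density) - 1))


def equivalent_energy_speed(energy_j: float, mass_kg: float) -> float:
    """Speed (m/s) at which the vehicle's kinetic energy equals energy_j."""
    return math.sqrt(2.0 * energy_j / mass_kg)


if __name__ == "__main__":
    # Hypothetical six-point crush profile across a 1.5 m damage width.
    profile = [0.30, 0.35, 0.40, 0.38, 0.30, 0.20]
    energy = crush_energy_joules(profile, 1.5, a_n_per_m=50_000.0, b_n_per_m2=700_000.0)
    ees_mps = equivalent_energy_speed(energy, mass_kg=1500.0)
    print(f"energy {energy / 1000:.1f} kJ, EES {ees_mps * 2.23694:.1f} mph")
```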

How are crash scenes measured and documented?

Traditional crash scene documentation relies on a total station (an electronic theodolite combined with a distance meter), a measuring wheel, or a GPS receiver to establish the coordinates of each evidence point. The reconstructionist shoots dozens to hundreds of points — vehicle corners, debris items, tire mark endpoints — and links them in diagramming software to produce a scale drawing. This process is thorough but slow: a moderately complex scene can take three to six hours to document with a two-person team.
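Each total station shot records a horizontal angle, a vertical (zenith) angle, and a slope distance. Converting that shot into a coordinate is plain trigonometry. The sketch below makes several assumptions: the instrument has been oriented so that horizontal angles are azimuths from grid north, all lengths share one consistent unit, and earth curvature and refraction are ignored. The `Station` and `reduce_shot` names are hypothetical and do not belong to any diagramming package's API.

```python
import math
from dataclasses import dataclass


@dataclass
class Station:
    easting: float
    northing: float
    elevation: float
    instrument_height: float


def reduce_shot(st: Station, azimuth_deg: float, zenith_deg: float,
                slope_dist: float, target_height: float) -> tuple[float, float, float]:
    """Convert one observation into (easting, northing, elevation).

    Curvature and refraction are ignored; they are negligible over the short
    sight lines typical of a crash scene.
    """
    az = math.radians(azimuth_deg)
    zen = math.radians(zenith_deg)
    horizontal = slope_dist * math.sin(zen)  # horizontal distance to target
    vertical = slope_dist * math.cos(zen)    # rise from instrument to prism
    e = st.easting + horizontal * math.sin(az)
    n = st.northing + horizontal * math.cos(az)
    z = st.elevation + st.instrument_height + vertical - target_height
    return e, n, z


if __name__ == "__main__":
    station = Station(5000.0, 5000.0, 100.0, 5.0)  # hypothetical, in feet
    start = reduce_shot(station, 45.0, 90.5, 80.0, 5.0)   # tire mark start
    end = reduce_shot(station, 60.0, 90.4, 120.0, 5.0)    # tire mark end
    print(f"tire mark plan length: {math.dist(start[:2], end[:2]):.2f} ft")
```

Diagramming software performs the same reduction internally before linking points into a scale drawing.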

Total station measurement is still the legal gold standard in many jurisdictions and offers sub-inch accuracy on a point-by-point basis. The limitation is throughput: every point must be individually sighted, and the instrument must maintain line-of-sight to each target. Complex intersections with multiple vehicles, significant debris scatter, and elevation changes test the limits of the approach. Our total station vs drone mapping comparison covers the accuracy and time tradeoffs in detail for investigators evaluating both options.

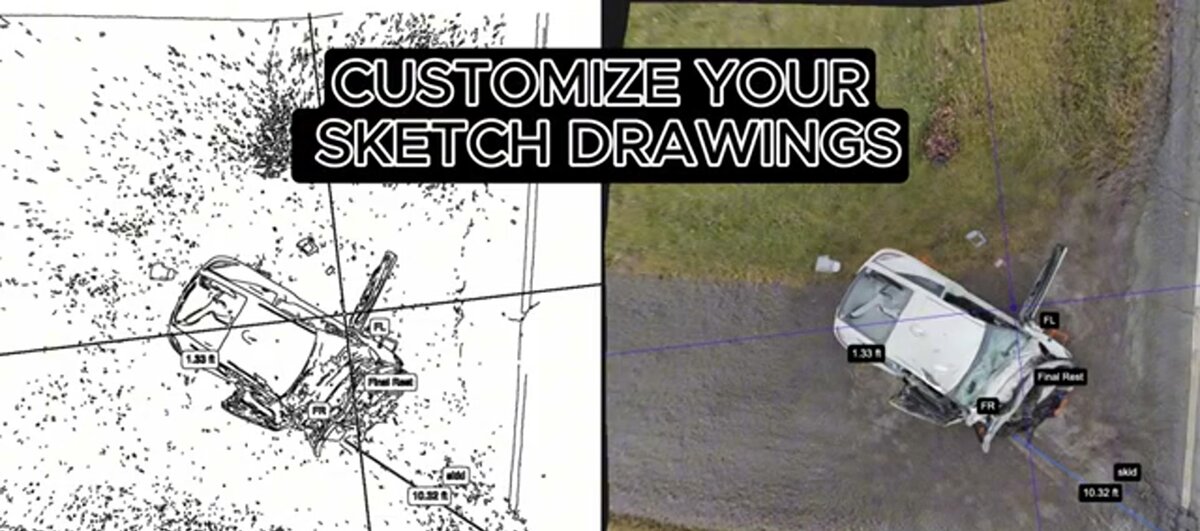

Scale diagrams produced from total station data are accurate but two-dimensional. Although the instrument records an elevation for every shot, the finished diagram typically flattens the scene into plan view and loses height information. That information matters for vehicle roll analysis, overhead sign positions, and sight-line calculations. Photogrammetry (the technique of deriving 3D measurements from overlapping photographs) addresses this gap by reconstructing dense 3D surface geometry for every photographed surface. Videogrammetry is a related technique in which 3D geometry is extracted from continuous video footage rather than still images. It applies the same principle to drone video and enables scene-wide capture in a single flight pass.
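The core idea of photogrammetry is easiest to see in its simplest textbook case. Two parallel images are separated by a known baseline B, and a point's depth follows from how far it shifts between the images (its disparity d): Z = f·B/d, with f the focal length in pixels. The sketch below applies that relation, along with the error estimate obtained by differentiating it. This is a simplification for illustration. Drone photogrammetry and videogrammetry platforms solve a general multi-view problem across many camera positions, and the input values are hypothetical.

```python
def stereo_depth(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth (m) of a point seen in two parallel, horizontally offset images."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_precision(focal_px: float, baseline_m: float, depth_m: float,
                    disparity_sigma_px: float) -> float:
    """Approximate 1-sigma depth error (m) caused by disparity error.

    From Z = f*B/d, |dZ/dd| = f*B/d**2 = Z**2 / (f*B).
    """
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_sigma_px


if __name__ == "__main__":
    z = stereo_depth(3000.0, 2.0, 120.0)  # hypothetical camera and baseline
    print(z, depth_precision(3000.0, 2.0, z, 0.5))
```

Because the error grows with the square of depth, a longer baseline or a closer capture distance improves precision.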

How does drone mapping change the reconstruction workflow?

A drone equipped with a stabilized video camera can capture a complete crash scene (all vehicles, debris, tire marks, and road geometry) in a single orbital flight lasting five to ten minutes. That video is uploaded to a cloud photogrammetry or videogrammetry platform, which processes it into a georeferenced 3D point cloud and mesh model. The reconstructionist then takes measurements directly inside the model rather than at the physical scene. This lets the road reopen sooner and captures continuous surface geometry instead of the discrete, individually sighted points a total station records.
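Measuring inside a model reduces to coordinate geometry on picked points. The sketch below computes slope and horizontal distances between two points. It also checks model scale against a feature whose length was measured independently at the scene, which is a general quality-control practice rather than a documented SkyeBrowse feature. The point coordinates, the feet-based local coordinate system, and the helper names are all hypothetical.

```python
import math

Point = tuple[float, float, float]  # (x, y, z) in a local projected system, feet


def distance_3d(p: Point, q: Point) -> float:
    """Straight-line (slope) distance between two model points."""
    return math.dist(p, q)


def horizontal_distance(p: Point, q: Point) -> float:
    """Plan-view distance, ignoring elevation."""
    return math.dist(p[:2], q[:2])


def scale_error_percent(model_len: float, reference_len: float) -> float:
    """Percent difference between a model measurement and a known reference."""
    return 100.0 * (model_len - reference_len) / reference_len


if __name__ == "__main__":
    skid_start: Point = (102.4, 310.8, 51.2)
    skid_end: Point = (141.9, 322.5, 50.6)
    print(f"skid: {horizontal_distance(skid_start, skid_end):.2f} ft plan, "
          f"{distance_3d(skid_start, skid_end):.2f} ft slope")
    # Hypothetical check: a lane-line segment taped at 10.00 ft measures 10.04 ft.
    print(f"scale error: {scale_error_percent(10.04, 10.00):+.2f}%")
```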

The practical impact on road clearance time is significant. A lane blocked for two extra hours during peak commute periods generates economic costs that local agencies track closely. Getting investigators off the road faster while collecting more comprehensive spatial data is the core operational argument for drone-based documentation. Drone 3D mapping technology produces outputs — point clouds, orthomosaics, and textured 3D meshes — that integrate directly into the reconstruction software investigators already use.
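The economic argument can be made concrete with a rough delay-cost estimate: vehicles delayed, multiplied by average delay, multiplied by a value of travel time. Every input in the sketch below is a hypothetical placeholder rather than a published figure. The model deliberately ignores queue build-up and dissipation, so it serves only as an order-of-magnitude illustration.

```python
def closure_delay_cost(vehicles_per_hour: float, closure_hours: float,
                       avg_delay_min: float, value_per_vehicle_hour: float) -> float:
    """Rough delay cost of a lane closure.

    Assumes constant flow and a fixed average delay per affected vehicle;
    queue build-up and dissipation after reopening are ignored.
    """
    vehicles_affected = vehicles_per_hour * closure_hours
    vehicle_hours = vehicles_affected * avg_delay_min / 60.0
    return vehicle_hours * value_per_vehicle_hour


if __name__ == "__main__":
    # Hypothetical peak-period inputs.
    long_closure = closure_delay_cost(1800.0, 4.0, 12.0, 20.0)
    short_closure = closure_delay_cost(1800.0, 2.0, 12.0, 20.0)
    print(f"estimated savings from reopening 2 h earlier: ${long_closure - short_closure:,.0f}")
```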

SkyeBrowse is a cloud-based videogrammetry platform designed specifically for this workflow. An investigator flies a supported drone in orbits around the crash scene, uploads the video through the SkyeBrowse app or Universal Upload interface, and receives a 3D model ready for measurement. No desktop processing hardware is required — the model is available through a browser at app.skyebrowse.com. The platform supports accuracy tiers from approximately 2–6 inches (Lite) to 0.25 inch (Premium, 8K processing) to 0.1 inch (Premium Advanced, 16K processing with AI moving-object removal), giving investigators control over the accuracy-cost tradeoff based on case severity. More than 1,200 agencies worldwide have adopted the platform, including traffic investigation units that routinely enter SkyeBrowse models as trial exhibits.
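The tier choice described above can be expressed as a simple rule: pick the least processing-intensive tier whose stated accuracy meets the case's tolerance. The sketch below encodes the accuracy figures quoted in this section. `choose_tier` is a hypothetical helper, not part of the SkyeBrowse app or any API. It assumes that higher tiers cost more, as the accuracy-cost tradeoff implies.

```python
# Stated accuracy (inches) per tier, from the figures quoted above. For Lite,
# the upper end of the 2-6 inch range is used as a conservative bound.
TIERS = [
    ("Lite", 6.0),
    ("Premium", 0.25),
    ("Premium Advanced", 0.1),
]


def choose_tier(required_accuracy_in: float) -> str:
    """Return the first (least processing-intensive) tier meeting the requirement."""
    for name, accuracy_in in TIERS:
        if accuracy_in <= required_accuracy_in:
            return name
    raise ValueError("no tier meets the requested accuracy")


if __name__ == "__main__":
    for tolerance in (12.0, 1.0, 0.1):  # hypothetical case tolerances, inches
        print(tolerance, choose_tier(tolerance))
```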

For a direct comparison of the available accident reconstruction software options — covering both the documentation and analysis phases — see our dedicated comparison guide.

How is a 3D crash scene model used in analysis and court?

A georeferenced 3D model becomes a permanent spatial record of the scene at the moment of documentation. Investigators take measurements from the model to feed into speed and momentum calculations. In court, the model is presented as a demonstrative exhibit: attorneys, jurors, and expert witnesses can navigate the scene from any angle, zoom to tire mark endpoints, and see vehicle positions in their actual spatial context. A well-documented 3D model resolves spatial relationships, such as elevations and sight lines, that a 2D diagram leaves ambiguous.

For analysis, reconstructionists export measurements — distances, vehicle positions, debris scatter radii — and feed them into dedicated crash analysis software alongside EDR data and witness information. The 3D model does not replace the physics calculations; it provides the spatial inputs those calculations require. An accident reconstruction expert retained for litigation will typically review the documentation methodology, the model accuracy tier, and the chain of custody before offering opinions that rely on the model.
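The sketch below shows how model-derived inputs enter the physics. It solves planar conservation of linear momentum for two vehicles' impact speeds, given their masses, approach directions, and post-impact velocities. In practice, the post-impact velocities come from separate post-impact speed analyses to rest positions. This is a minimal illustration: real analyses also check energy, restitution, and rotation, and all values in the usage example are hypothetical. The division by sin(θ₂ − θ₁) shows why approach angles matter. As the two approach directions become nearly parallel, small angle errors are strongly amplified.

```python
import math


def _components(speed: float, direction_deg: float) -> tuple[float, float]:
    """Split a velocity into x and y components (direction CCW from +x)."""
    a = math.radians(direction_deg)
    return speed * math.cos(a), speed * math.sin(a)


def pre_impact_speeds(m1: float, m2: float, approach1_deg: float, approach2_deg: float,
                      post1: tuple[float, float], post2: tuple[float, float]) -> tuple[float, float]:
    """Solve planar linear momentum conservation for both impact speeds.

    post1 and post2 are (speed, direction_deg) just after impact. Angles are
    measured counterclockwise from the scene's +x axis; units must be consistent.
    """
    v1x, v1y = _components(*post1)
    v2x, v2y = _components(*post2)
    # Total momentum after impact equals total momentum before impact.
    px = m1 * v1x + m2 * v2x
    py = m1 * v1y + m2 * v2y
    t1, t2 = math.radians(approach1_deg), math.radians(approach2_deg)
    s = math.sin(t2 - t1)
    if abs(s) < 1e-6:
        raise ValueError("approach directions are (nearly) collinear")
    # Cramer's rule on the 2x2 system in the unknown speeds v1, v2.
    v1 = (px * math.sin(t2) - py * math.cos(t2)) / (m1 * s)
    v2 = (py * math.cos(t1) - px * math.sin(t1)) / (m2 * s)
    return v1, v2


if __name__ == "__main__":
    # Hypothetical right-angle crash; vehicles move together after impact.
    post = (math.hypot(10.0, 5.0), math.degrees(math.atan2(5.0, 10.0)))
    print(pre_impact_speeds(1000.0, 1000.0, 0.0, 90.0, post, post))
```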

For court admission, investigators document the drone model, serial number, calibration status, and flight log alongside the processing metadata from the platform. SkyeBrowse exports include point cloud files (LAZ format) suitable for independent re-measurement by opposing experts, supporting the authentication requirements courts apply under rules like FRE 901. The platform's AWS GovCloud hosting and FedRAMP Moderate authorization address the data security chain-of-custody questions that prosecutors and defense attorneys raise about cloud-processed evidence.
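A common way to show that an exported file has not changed since export is to record a cryptographic hash at export time and recompute it later. The sketch below builds a small JSON manifest of SHA-256 hashes for a set of files. It reflects a general evidence-handling practice and does not describe how SkyeBrowse records its metadata. The function names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hex SHA-256 of a file, read in chunks so large point clouds fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(paths: list[Path]) -> str:
    """JSON manifest recording name, size, and SHA-256 for each exported file."""
    entries = [
        {"file": p.name, "bytes": p.stat().st_size, "sha256": sha256_file(p)}
        for p in paths
    ]
    return json.dumps(
        {"created_utc": datetime.now(timezone.utc).isoformat(), "files": entries},
        indent=2,
    )
```

For example, `build_manifest([Path("scene_export.laz")])` (a hypothetical file name) would be run at export. The stored manifest can then be compared against a fresh run before the file is shared with opposing experts.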

The side-by-side below illustrates the shift from traditional sketch documentation to 3D model output — the same scene, captured by different methods, showing what spatial information each approach preserves and loses.

FAQ

How long does collision reconstruction take?

Scene documentation with a total station traditionally takes several hours (three to six for a moderately complex scene), followed by days of office analysis. Drone-based videogrammetry compresses the capture phase to under 10 minutes and delivers a measurable 3D model within hours, cutting total scene time dramatically. The road can typically reopen before the model is fully processed.

What qualifications does an accident reconstructionist need?

Most jurisdictions require formal training in crash investigation, physics, and vehicle dynamics. ACTAR offers the industry's primary credential, requiring documented casework, written exams, and continuing education. IPTM provides widely recognized training programs for law enforcement and civilian investigators. Agencies deploying drone documentation should also ensure that their pilots either hold a current FAA Part 107 remote pilot certificate or fly under the agency's public aircraft Certificate of Waiver or Authorization (COA).

Is drone 3D evidence admissible in court?

Yes, when properly documented. Courts evaluate digital evidence under standards like FRE 901 (authentication) and Daubert (scientific reliability). Investigators must document the drone model, flight logs, telemetry data, and processing methodology. SkyeBrowse models include embedded metadata and support export of raw point clouds for independent verification — the same data chain required for any other instrumented measurement admitted as evidence.