The photogrammetry industry in 2026 looks nothing like it did five years ago. AI-powered feature matching now cuts reconstruction times by 30–60 percent on large datasets. Videogrammetry, which extracts 3D geometry from continuous drone video rather than planned still-photo grids, has become the default workflow for time-sensitive applications. Edge computing is bringing on-device processing to the field. FAA Remote ID enforcement has reshaped how mapping operations are planned and logged. And smartphone LiDAR is filling gaps where drone deployment is impractical. This article focuses on the industry shifts practitioners most need to know about in 2026, and it is updated regularly with photogrammetry news from across the industry. For a technical breakdown of how aerial photogrammetry itself works, see our aerial photogrammetry guide.

Key Takeaways

- AI-powered feature matching has cut photogrammetry reconstruction times by 30–60% on large datasets and can now achieve sub-inch accuracy without ground control points, a level of accuracy that required survey crews and GCP targets just two years ago.

- Videogrammetry has replaced structured photo-grid workflows for time-sensitive applications, compressing field capture to a 3–5 minute orbit and processing to under 10 minutes.

- Edge computing is shifting initial reconstruction off the cloud and onto the drone or a ruggedized field tablet, enabling real-time situational models in areas without connectivity.

- FAA Remote ID compliance, required since September 2023 and fully enforced since March 2024, has changed how operators log and geotag mapping flights and is now a standard part of enterprise drone programs.

- Smartphone LiDAR (available on Apple's iPhone Pro models from the iPhone 12 Pro onward) is increasingly used alongside drone photogrammetry for indoor spaces and close-range detail that drones cannot safely capture.

Contents

- What is driving the shift from photo-based to video-based photogrammetry?

- How is AI changing photogrammetry software in 2026?

- How is edge computing changing field photogrammetry workflows?

- What does FAA Remote ID mean for drone mapping operations?

- How is smartphone LiDAR supplementing drone photogrammetry?

- Which industries are adopting photogrammetry the fastest?

- Orthomosaic Mapping News and Developments

- Drone Mapping Software Updates

- FAQ

What is driving the shift from photo-based to video-based photogrammetry?

Videogrammetry — applying structure-from-motion algorithms to frames extracted from continuous drone video rather than a pre-planned still-image grid — has become the preferred approach for any application where speed matters more than sub-centimeter precision. The capture phase compresses from a 20–40 minute grid flight to a 3–5 minute freehand orbit, and cloud processing returns a 3D model in under 10 minutes.
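As a rough illustration of the frame-extraction step, the sketch below picks evenly spaced frame indices from a video of known duration and frame rate. The function name `select_frame_indices` and the target of 300 frames are assumptions made for this example, not parameters of any particular platform; production pipelines typically also weigh blur and overlap when choosing frames.

```python
def select_frame_indices(duration_s: float, fps: float, target_frames: int) -> list[int]:
    """Return evenly spaced frame indices covering the whole video."""
    total = int(duration_s * fps)
    if total <= 0 or target_frames <= 0:
        return []
    if target_frames >= total:
        # Fewer frames available than requested: keep them all.
        return list(range(total))
    step = total / target_frames
    return [int(i * step) for i in range(target_frames)]


# A 4-minute orbit at 30 fps holds 7,200 frames; keep 300 of them (assumed target).
indices = select_frame_indices(duration_s=240, fps=30, target_frames=300)
print(len(indices), indices[:3])
```

Even sampling is the simplest possible policy; it is shown here only to make the idea of turning video into a structure-from-motion image set concrete.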

For a technical explanation of how the underlying photogrammetry algorithms work — SfM, GSD, overlap requirements, and GCP placement — see our aerial photogrammetry guide.

The operational gap has widened because of how missions actually unfold in the field. A traffic investigator reopening a highway cannot wait hours for a photogrammetry model. A construction superintendent who needs a daily site snapshot cannot schedule a grid flight every morning. Videogrammetry platforms address both constraints by accepting ordinary .MP4 and .MOV files from any drone and processing them in the cloud with no local hardware requirements.

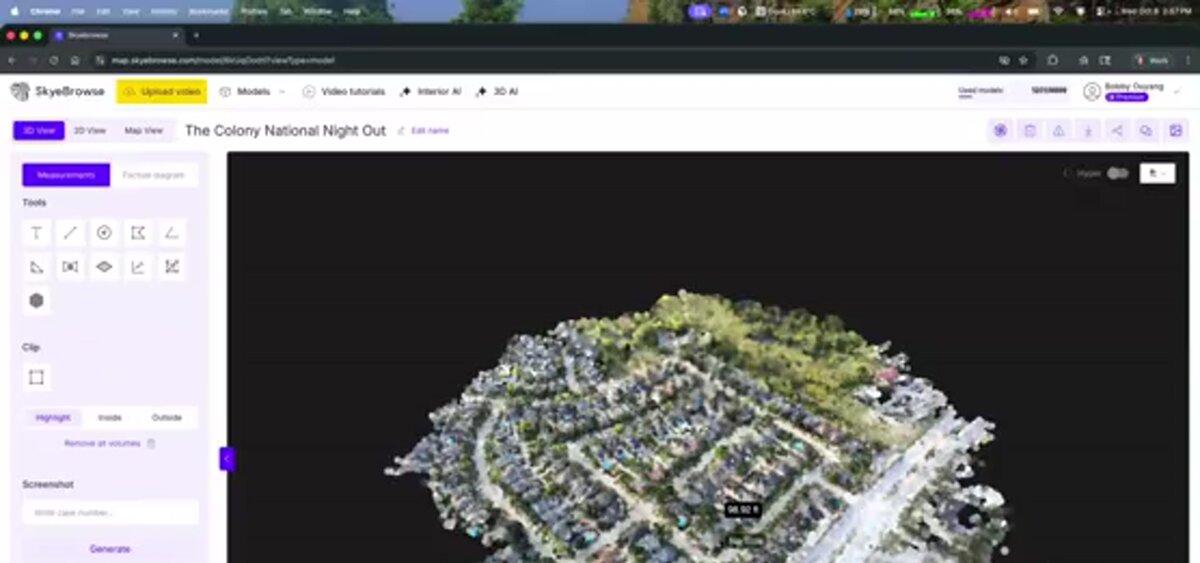

SkyeBrowse, a cloud-based videogrammetry platform used by more than 1,200 agencies worldwide, demonstrates the commercial trajectory: its Universal Upload feature accepts video from DJI drones, action cameras, and cell phones, removing the hardware dependency entirely. The USGS has documented photogrammetric methods in federal UAS programs for years, and the agency's unmanned aircraft systems program increasingly references video-derived datasets alongside traditional photo-based products.

A secondary trend accelerating adoption: the shift toward open export formats. Teams are no longer locked into proprietary ecosystems — LAZ point clouds, GeoTIFF orthomosaics, and GLB meshes are all open standards that load directly into GIS, CAD, BIM, and forensic analysis platforms without vendor-specific converters. This interoperability has made it far easier for agencies to compare platforms and switch without data migration costs.

How is AI changing photogrammetry software in 2026?

AI is improving photogrammetry at two key stages: feature matching during reconstruction and semantic classification of the finished point cloud. Neural-network-based feature detectors find correspondences between frames faster and more reliably than classical algorithms in low-texture scenes — asphalt parking lots, snow-covered fields, and dense vegetation — where traditional methods often fail. The headline result: AI-enhanced processing now achieves sub-inch accuracy without ground control points, a capability that required survey crews and physical GCP targets just two years ago.

Research published in IEEE Transactions on Geoscience and Remote Sensing has tracked the adoption of deep learning into structure-from-motion pipelines, with multiple papers demonstrating 30 to 60 percent reductions in processing time when neural feature matchers replace SIFT-based approaches on large datasets. The accuracy gains are equally significant: AI-assisted bundle adjustment can self-correct GPS drift in drone telemetry, pulling absolute positional accuracy into the sub-inch range without any physical ground markers.
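For context on what neural matchers replace, here is a minimal sketch of the classical baseline: nearest-neighbour descriptor matching with Lowe's ratio test, as commonly paired with SIFT. The three-dimensional toy descriptors are invented for illustration (real SIFT descriptors have 128 dimensions), and the 0.75 threshold is an assumed, commonly used value.

```python
import math


def match_descriptors(desc_a, desc_b, ratio=0.75):
    """Match each descriptor in desc_a to desc_b using Lowe's ratio test.

    Returns a list of (index_a, index_b) pairs.
    """
    matches = []
    for i, a in enumerate(desc_a):
        # Distance from this descriptor to every descriptor in the other frame.
        dists = sorted((math.dist(a, b), j) for j, b in enumerate(desc_b))
        if len(dists) < 2:
            continue
        (best, j_best), (second, _) = dists[0], dists[1]
        # Keep the match only if the best candidate clearly beats the runner-up.
        if best < ratio * second:
            matches.append((i, j_best))
    return matches


# Toy descriptors: the third feature in frame_a has two near-identical
# candidates in frame_b, as happens on repetitive or low-texture surfaces.
frame_a = [(0.0, 0.0, 1.0), (1.0, 0.0, 0.0), (0.5, 0.5, 0.5)]
frame_b = [(1.0, 0.1, 0.0), (0.0, 0.1, 1.0), (0.5, 0.5, 0.45), (0.5, 0.5, 0.55)]
print(match_descriptors(frame_a, frame_b))
```

The ambiguous third feature is discarded by the ratio test, which is one reason classical pipelines struggle on surfaces where many patches look alike.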

In commercial photogrammetry software, the most visible AI application is moving-object removal. Vehicles, pedestrians, and animals that appear in multiple frames at different positions create reconstruction artifacts — ghosting, floating geometry, and hole-fill errors that degrade model quality. SkyeBrowse's Premium Advanced tier applies AI to identify and suppress these transient elements during processing, producing cleaner models suitable for courtroom evidence and forensic documentation. Post-processing AI also classifies point cloud returns by category — separating ground, structure, vegetation, and moving objects — without the manual filtering that previously required a trained GIS technician.
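Once points carry class labels, removing a category is a simple filter. The sketch below assumes each point is an `(x, y, z, class_code)` tuple and uses the ASPRS LAS standard codes for ground, high vegetation, and building; the code 64 for transient objects is a hypothetical user-defined value chosen for this example, and the coordinates are made up.

```python
from collections import Counter

# ASPRS LAS standard class codes; 64 is a hypothetical user-defined code
# used here for transient objects such as vehicles.
GROUND, HIGH_VEGETATION, BUILDING, TRANSIENT = 2, 5, 6, 64


def drop_classes(points, excluded):
    """Return the points whose class code is not in `excluded`."""
    return [p for p in points if p[3] not in excluded]


# Each point is (x, y, z, class_code); values are invented for illustration.
cloud = [
    (0.0, 0.0, 10.0, GROUND),
    (1.0, 0.0, 10.1, GROUND),
    (1.0, 1.0, 14.0, BUILDING),
    (2.0, 1.0, 11.5, TRANSIENT),
    (3.0, 2.0, 18.0, HIGH_VEGETATION),
]
static_scene = drop_classes(cloud, excluded={TRANSIENT})
print(Counter(p[3] for p in static_scene))
```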

How is edge computing changing field photogrammetry workflows?

Edge computing, meaning processing that runs on a device at or near the capture site rather than on a remote server, is cutting the delay between flight and a first usable model from minutes to seconds in connectivity-constrained environments. Ruggedized field tablets and next-generation drone controllers now carry enough GPU headroom to run lightweight reconstruction pipelines on-device, producing a low-resolution situational model before the drone has landed.

The practical impact is clearest in public safety and military applications, where cellular connectivity cannot be guaranteed and even a 5-minute upload wait may be unacceptable. On-device edge processing produces a coarse model usable for perimeter planning or scene orientation, with the higher-resolution cloud version arriving later when connectivity is restored. Some drone manufacturers are integrating edge inference directly into the aircraft's companion computer, so the first preview renders during the flight itself.

For enterprise teams, edge processing also reduces the volume of high-frame-rate video that has to be uploaded to the cloud. A 10-minute 4K flight can produce anywhere from several gigabytes to tens of gigabytes of raw footage, depending on bitrate and codec. Running a frame-selection pass on-device before upload cuts the data sent to the cloud by 60–80 percent, which matters at scale when an organization is running hundreds of missions per month.
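The upload saving can be estimated with simple arithmetic. In the sketch below, the 100 Mbps bitrate is an assumed figure for a constant-bitrate 4K recording, and the function names are hypothetical; file size scales in proportion to bitrate, so higher-bitrate codecs produce correspondingly larger files.

```python
def video_size_gb(bitrate_mbps: float, duration_s: float) -> float:
    """Approximate size in gigabytes (10^9 bytes) of a constant-bitrate recording."""
    bits = bitrate_mbps * 1_000_000 * duration_s
    return bits / 8 / 1_000_000_000


def upload_after_selection(size_gb: float, reduction: float) -> float:
    """Data left to upload after an on-device pass removes `reduction` (0 to 1) of it."""
    return size_gb * (1.0 - reduction)


raw = video_size_gb(bitrate_mbps=100, duration_s=600)  # 10-minute flight, assumed 100 Mbps
print(f"raw footage: {raw:.1f} GB")
for r in (0.6, 0.8):
    print(f"after {r:.0%} reduction: {upload_after_selection(raw, r):.1f} GB")
```

Multiplying the per-mission figure by the number of missions per month gives a quick estimate of the fleet-wide upload volume.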

What does FAA Remote ID mean for drone mapping operations?

FAA Remote ID, the rule requiring drones to broadcast their identity, location, and operator location in real time, has applied to operators since September 16, 2023, and the FAA's discretionary enforcement period ended on March 16, 2024. For commercial mapping operations, Remote ID compliance is now a prerequisite for operating in controlled airspace and near populated areas. Enterprise drone programs have had to update fleet hardware, integrate broadcast modules, and revise their flight logging workflows to maintain an auditable record of every mapping mission.

The FAA Remote ID rule applies to every drone that must be registered with the FAA, which includes all aircraft flown under Part 107 and recreational aircraft weighing more than 0.55 lb (250 g). Most drones manufactured since the rule's manufacturer compliance date in late 2022 include built-in broadcast capability; older aircraft require an external Remote ID broadcast module. For mapping teams, the operational change is mostly administrative: pre-flight checks now include confirming that the Remote ID broadcast is active, and mission logs should include the Remote ID serial number alongside GPS tracks and imagery.
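One way to make that logging habit concrete is a small mission record that cannot pass pre-flight without a Remote ID entry. This is only a sketch: the `MissionLog` class, its field names, and the serial value are hypothetical and do not reflect any FAA-mandated schema.

```python
from dataclasses import dataclass, field, asdict
import json


@dataclass
class MissionLog:
    """One mapping flight; every field name here is a hypothetical example."""
    mission_id: str
    remote_id_serial: str          # serial number the aircraft or module broadcasts
    remote_id_broadcast_ok: bool   # confirmed active during pre-flight checks
    gps_track_file: str
    imagery_files: list = field(default_factory=list)


def preflight_passed(log: MissionLog) -> bool:
    """Pass only if a serial is recorded and the broadcast was confirmed."""
    return bool(log.remote_id_serial.strip()) and log.remote_id_broadcast_ok


log = MissionLog(
    mission_id="2026-03-14-site-a",
    remote_id_serial="EXAMPLE-SERIAL-0001",  # placeholder, not a real serial format
    remote_id_broadcast_ok=True,
    gps_track_file="flight_0001.SRT",
    imagery_files=["flight_0001.MP4"],
)
print(preflight_passed(log))
print(json.dumps(asdict(log), indent=2))
```

Serializing the record to JSON keeps it easy to archive next to the telemetry and imagery it describes.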

The secondary effect on photogrammetry workflows is positive: Remote ID logs provide a built-in timestamped record of flight path, which supplements telemetry files (.SRT, .ASS) used for georeferencing. Several platform providers are exploring ways to ingest Remote ID broadcast data as a supplementary georeferencing source, particularly for operations in areas where GNSS signal quality is degraded. Commercial UAV News has covered the regulatory transition extensively, noting that large public safety agencies moved quickly to compliance while smaller independent operators faced a longer adjustment period.
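To show what reading a telemetry sidecar involves, here is a minimal parser for an .SRT-style file. The bracketed `latitude`/`longitude`/`rel_alt` layout in `SAMPLE_SRT` is an assumed format for illustration; real telemetry layouts vary by manufacturer, model, and firmware, so the pattern would need adjusting for actual files. Treating `rel_alt` as meters is likewise an assumption.

```python
import re

# Assumed sample layout; real telemetry files differ between drone models.
SAMPLE_SRT = """1
00:00:00,000 --> 00:00:00,033
[latitude: 40.712800] [longitude: -74.006000] [rel_alt: 30.500 abs_alt: 41.200]

2
00:00:00,033 --> 00:00:00,066
[latitude: 40.712805] [longitude: -74.006010] [rel_alt: 30.600 abs_alt: 41.300]
"""

PATTERN = re.compile(
    r"(\d{2}:\d{2}:\d{2},\d{3}) --> .*?\n"
    r".*?\[latitude: (-?\d+\.\d+)\] \[longitude: (-?\d+\.\d+)\] \[rel_alt: (-?\d+\.\d+)"
)


def parse_srt(text):
    """Return one position fix per subtitle block that matches the assumed layout."""
    fixes = []
    for m in PATTERN.finditer(text):
        start, lat, lon, rel_alt = m.groups()
        fixes.append({
            "start": start,
            "lat": float(lat),
            "lon": float(lon),
            "rel_alt_m": float(rel_alt),  # assumed to be meters above takeoff
        })
    return fixes


for fix in parse_srt(SAMPLE_SRT):
    print(fix)
```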

How is smartphone LiDAR supplementing drone photogrammetry?

Apple's LiDAR scanner, available on iPhone Pro models since the iPhone 12 Pro and on iPad Pro models since 2020, has given field teams a zero-additional-cost tool for capturing indoor and close-range 3D geometry that drone photogrammetry cannot safely reach. Stairwells, building interiors, vehicle interiors, and confined-space assets are all within LiDAR range. The resulting point clouds are coarser than dedicated terrestrial scanners but sufficient for many documentation and BIM coordination tasks.

The workflow is now common enough to have settled into a hybrid pattern: drone photogrammetry captures the exterior and roof of a structure, while smartphone LiDAR captures the interior. The two datasets are registered to a shared coordinate frame — typically using physical targets or known reference points — and merged into a single unified model. For insurance claims, this eliminates the need to send adjusters inside potentially unsafe structures while still producing interior measurements. For construction, it closes the gap between as-built exterior drone surveys and interior finish coordination.
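The registration step can be sketched in simplified form. The example below fits a least-squares rigid transform (rotation plus translation) in 2D from matched target coordinates; real registration is done in 3D and often refined with additional alignment steps, so treat this as a plan-view illustration only. The function names and target coordinates are hypothetical.

```python
import math


def fit_rigid_2d(src, dst):
    """Least-squares rotation angle and translation mapping src points onto dst."""
    n = len(src)
    sx, sy = sum(p[0] for p in src) / n, sum(p[1] for p in src) / n
    dx, dy = sum(q[0] for q in dst) / n, sum(q[1] for q in dst) / n
    dot = cross = 0.0
    for (px, py), (qx, qy) in zip(src, dst):
        ax, ay = px - sx, py - sy   # source point relative to its centroid
        bx, by = qx - dx, qy - dy   # target point relative to its centroid
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx
    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    return theta, (dx - (c * sx - s * sy), dy - (s * sx + c * sy))


def apply_rigid_2d(theta, t, pt):
    """Rotate pt by theta, then translate by t."""
    c, s = math.cos(theta), math.sin(theta)
    return (c * pt[0] - s * pt[1] + t[0], s * pt[0] + c * pt[1] + t[1])


# Three targets seen in the LiDAR scan's local frame and in the drone model's frame.
lidar_targets = [(0.0, 0.0), (4.0, 0.0), (0.0, 3.0)]
drone_targets = [(10.0, 20.0), (10.0, 24.0), (7.0, 20.0)]
theta, t = fit_rigid_2d(lidar_targets, drone_targets)
print(round(math.degrees(theta), 3), tuple(round(v, 3) for v in t))
print(apply_rigid_2d(theta, t, (4.0, 3.0)))
```

With the transform fitted from the shared targets, every interior point can be mapped into the exterior model's frame before the two datasets are merged.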

Apps like Polycam and Matterport, along with SLAM-based open-source tools, can export LiDAR scans in formats such as LAZ and OBJ, making them directly compatible with the same downstream workflows used for drone photogrammetry outputs. The convergence of indoor LiDAR and outdoor drone photogrammetry is one of the more practical cross-method trends of 2026: not a replacement for either technology, but a way to cover the full site geometry in a single field visit.

Which industries are adopting photogrammetry the fastest?

Public safety and construction are the fastest-growing segments for drone photogrammetry in 2026. Law enforcement agencies use rapid video-based workflows to document accident scenes and crime scenes without blocking roads for hours. Construction teams deploy photogrammetry for daily progress monitoring, earthwork volume measurement, and as-built verification against design drawings. Both sectors are driven by the same need: actionable spatial data faster than traditional survey methods can provide.

Part 107 commercial drone registrations continued growing in 2025, with public safety and construction among the top sectors, reflecting the broader mainstreaming of drone photogrammetry beyond traditional land surveying firms.

Key industry adoption patterns in 2026:

- Public safety: Over 1,200 law enforcement and fire agencies now use SkyeBrowse for scene documentation. The typical workflow is a 4-minute orbit flight processed to a 3D model in under 10 minutes, enabling road reopening hours earlier than with traditional total-station surveys.

- Construction: Earthwork volume tracking, cut-and-fill analysis, and daily progress snapshots are standard on mid-to-large commercial projects. Photogrammetry deliverables feed directly into BIM coordination workflows.

- Insurance: Roof inspection and property damage documentation after storms use drone photogrammetry to generate defensible measurements without sending adjusters onto damaged structures.

- Infrastructure: Bridge deck inspections, utility corridor surveys, and telecom tower documentation increasingly rely on drone photogrammetry over manual rope-access inspection.
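The earthwork volume tracking mentioned in the construction entry above comes down to differencing two elevation grids. The sketch below assumes both surveys have already been resampled to the same grid; the function name, the 2 m cell size, and the elevation values are made up for illustration.

```python
def cut_fill_volume(before, after, cell_area_m2):
    """Return (cut, fill) volumes in cubic meters between two aligned elevation grids."""
    cut = fill = 0.0
    for row_before, row_after in zip(before, after):
        for z_before, z_after in zip(row_before, row_after):
            dz = z_after - z_before
            if dz > 0:
                fill += dz * cell_area_m2    # material added
            else:
                cut += -dz * cell_area_m2    # material removed
    return cut, fill


# Elevations in meters on a 2 m x 2 m grid (4 m^2 per cell).
monday = [[10.0, 10.0], [10.0, 10.0]]
friday = [[9.5, 10.0], [10.5, 11.0]]
cut, fill = cut_fill_volume(monday, friday, cell_area_m2=4.0)
print(f"cut: {cut} m^3, fill: {fill} m^3")
```

Running the same comparison on each new survey against the design surface, rather than the previous survey, gives the remaining cut and fill instead of the change since the last flight.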

Orthomosaic Mapping News and Developments

Orthomosaic mapping has seen rapid adoption beyond traditional surveying. Construction teams now use weekly drone flights to generate orthomosaics for progress monitoring, comparing current site conditions against BIM design files. Insurance adjusters generate orthomosaics of damaged properties for claims documentation, replacing manual sketches with measurable aerial imagery.

The biggest shift in orthomosaic mapping technology is the move from photo-only to video-based pipelines. Platforms like SkyeBrowse now produce GeoTIFF orthomosaics as a standard output alongside 3D point clouds and meshes, all from a single drone video orbit. For a deeper look at how orthomosaics differ from 3D models, see our orthomosaic guide.

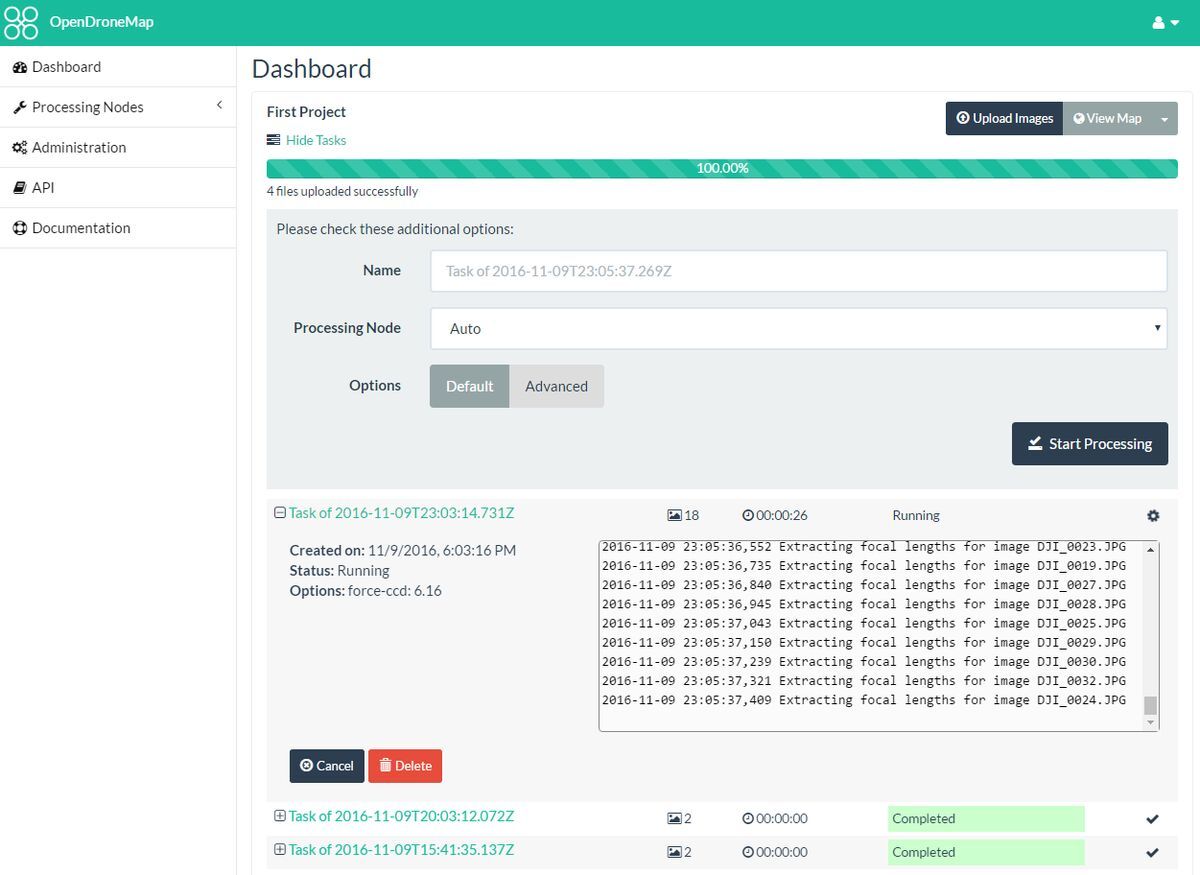

Drone Mapping Software Updates

The drone mapping software market continues consolidating around two processing approaches: cloud-based platforms that handle everything from upload to deliverable, and desktop applications that offer granular control for specialist workflows. Notable developments in 2026 include expanded video input support across multiple platforms and increasing adoption of AI-powered object removal for cleaner 3D reconstructions.

SkyeBrowse's Premium Advanced tier now processes at 16K resolution with AI moving-object removal, delivering 0.1-inch accuracy models suitable for forensic and courtroom use. For a full comparison of current platforms, see our drone mapping software guide.

FAQ

What is the difference between photogrammetry and videogrammetry?

Photogrammetry reconstructs 3D geometry from hundreds of overlapping still photographs captured on a planned grid flight. Videogrammetry extracts frames from continuous video and applies the same structure-from-motion algorithms, compressing capture to a simple orbit and processing to under 10 minutes. For a technical breakdown of how the two methods compare on accuracy, workflow, and coverage area, see our aerial photogrammetry guide.

How is AI changing photogrammetry software in 2026?

AI accelerates feature matching during reconstruction and automates semantic classification of the finished point cloud. The most visible application in commercial platforms is AI-powered moving-object removal, which eliminates vehicles and pedestrians that would otherwise create reconstruction artifacts. Processing times have improved 30 to 60 percent on large datasets compared to classical algorithms.

What industries are adopting photogrammetry the fastest in 2026?

Public safety and construction are the two fastest-growing segments. Law enforcement agencies document accident and crime scenes with video-based photogrammetry workflows in under 10 minutes, while construction teams use it for daily earthwork monitoring and as-built verification. Insurance and infrastructure inspection are close behind, driven by the same need for fast, defensible spatial data without putting personnel in unsafe positions.