Reality capture is the process of measuring a physical environment and converting it into accurate, georeferenced digital data — point clouds, 3D meshes, orthomosaic maps, or full digital twins. The term is a technology category, not a brand: it covers LiDAR scanning, photogrammetry, structured light scanning, and videogrammetry, each suited to different scenes, budgets, and turnaround requirements. This guide breaks down every major method, explains the key accuracy and workflow tradeoffs, and shows where drone-based videogrammetry is reshaping how fast teams in construction, public safety, and insurance can capture and use spatial data.

Key Takeaways

- Reality capture is an umbrella term for any technology — LiDAR, photogrammetry, structured light, or videogrammetry — that converts physical environments into precise digital spatial data.

- "RealityCapture" (capital R, capital C) is a proprietary photogrammetry software product by Epic Games; "reality capture" (lowercase) is the broader field it belongs to.

- Videogrammetry — deriving 3D geometry from video frames rather than individual photographs — is the fastest field-to-model method available today, with some platforms delivering usable models in minutes.

- ASPRS positional accuracy standards and NIST measurement guidance provide the independent benchmarks that define what "accurate" means for any reality capture output.

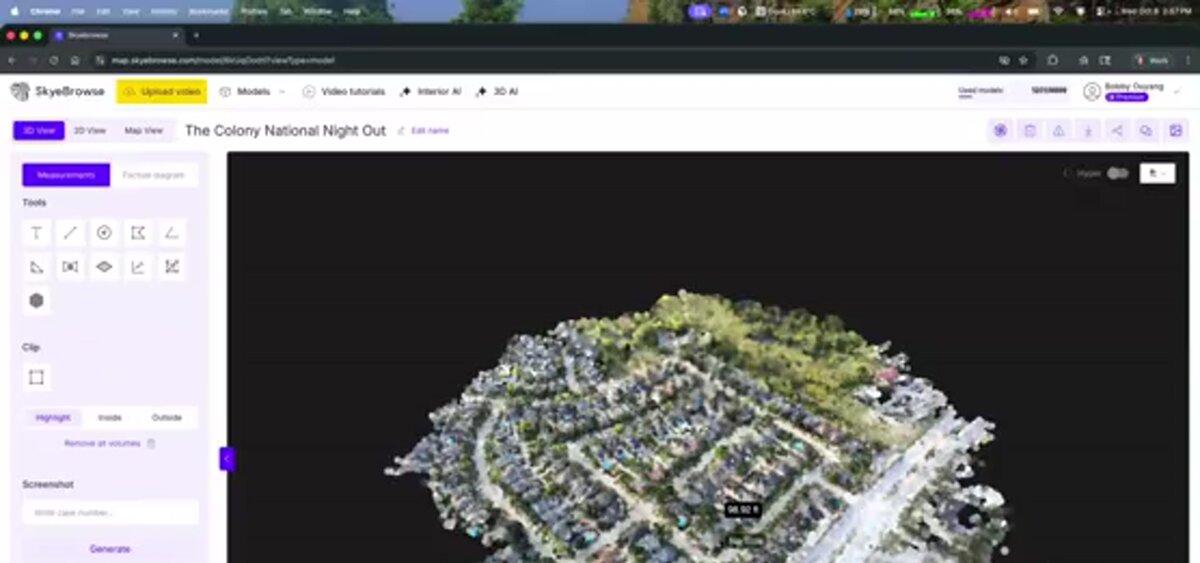

- SkyeBrowse's cloud videogrammetry platform converts drone video to 3D models and orthomosaic maps without desktop processing hardware, making sub-inch-accurate reality capture accessible to field teams with a standard drone.

Contents

- What is reality capture and how does it work?

- What are the main reality capture methods?

- How accurate is reality capture data?

- What is reality capture used for in construction?

- What is the fastest way to capture a scene in the field?

- FAQ

What is reality capture and how does it work?

Reality capture is any process that collects spatial measurements from a physical environment and converts them into a digital model. The source data can be laser pulses (LiDAR), overlapping photographs (photogrammetry), structured light patterns, or continuous video frames (videogrammetry). The output is always a set of georeferenced spatial data: a point cloud, a 3D mesh, or a 2D orthomosaic map that accurately represents real-world geometry.

At its core, every reality capture workflow follows three stages: data collection in the field, geometric reconstruction in software, and export of deliverables for downstream use. What differs is the sensing technology and processing path.

LiDAR (Light Detection and Ranging) fires laser pulses and measures the time-of-flight to calculate distances, producing dense point clouds even in low-light conditions. Photogrammetry identifies matching features across dozens or hundreds of overlapping photographs and uses triangulation to compute 3D coordinates. Structured light scanning projects known light patterns onto a surface and measures deformations to build high-detail geometry — common for small-object or indoor industrial work. Videogrammetry uses the same photogrammetric math but sources its input frames from video rather than individual stills, dramatically reducing field time because a single flight or walk-through generates thousands of usable frames automatically.
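As a rough illustration of the time-of-flight principle described above, the following Python sketch converts a pulse's round-trip time into a one-way range. The function name is hypothetical, and the calculation assumes the vacuum speed of light, ignoring the atmospheric and instrument corrections a real LiDAR system applies.

```python
# Speed of light in vacuum, metres per second (exact by SI definition).
SPEED_OF_LIGHT_M_S = 299_792_458.0


def tof_range_m(round_trip_seconds: float) -> float:
    """Return the one-way distance implied by a pulse's round-trip time."""
    if round_trip_seconds < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels to the surface and back, so halve the total path.
    return SPEED_OF_LIGHT_M_S * round_trip_seconds / 2.0


# A return arriving 400 nanoseconds after emission is roughly 60 m away.
print(f"{tof_range_m(400e-9):.2f} m")
```

Because light covers about 30 cm per nanosecond, centimeter-level ranging depends on timing electronics that resolve small fractions of a nanosecond.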

The outputs of any of these methods feed directly into digital twin technology platforms, GIS systems, BIM workflows, or legal evidence packages, all of which consume the same kinds of georeferenced spatial data.

What are the main reality capture methods?

The four primary reality capture methods are LiDAR, photogrammetry, structured light scanning, and videogrammetry. LiDAR produces the densest point clouds and works in poor lighting. Photogrammetry yields photorealistic textures from overlapping images and is the most widely deployed drone method. Structured light scanning excels at close-range industrial or heritage subjects. Videogrammetry offers the fastest field-to-model turnaround by deriving frames from continuous video.

Each method has a distinct accuracy ceiling, hardware cost, and processing time, which makes "best method" highly situational.

LiDAR systems mounted on drones, mobile ground platforms, or fixed tripods can achieve point densities exceeding 1,000 points per square meter and sub-centimeter accuracy when paired with high-grade IMU/GNSS. The USGS 3D Elevation Program uses airborne LiDAR as the primary data source for national elevation datasets precisely because of this consistency. The tradeoff is cost: quality airborne LiDAR payloads range from roughly $15,000 to more than $100,000 and require skilled operators.

Photogrammetry remains the dominant drone-based method for large outdoor scenes. Overlapping nadir (straight-down) and oblique images are processed through structure-from-motion (SfM) algorithms to build dense point clouds and textured 3D meshes. The ASPRS Positional Accuracy Standards for Digital Geospatial Data define the accuracy tiers (Class 1 through Class 3) that photogrammetric drone surveys must meet for professional and engineering use. Ground control points (GCPs) placed in the scene and surveyed before the flight improve absolute accuracy significantly. See our guide to drone 3D mapping for a full walkthrough of the photogrammetric mapping workflow.

Structured light scanners — such as those used in industrial quality control, forensic artifact documentation, and heritage preservation — project a known pattern (grids, stripes, or coded dots) and detect deformation on the surface. Range is limited (typically under 5 meters), but accuracy can reach 0.01 mm, making it unmatched for small, high-detail subjects.

Videogrammetry is photogrammetry derived from video frames rather than discrete triggered shots. A single drone flight capturing 4K or 8K video at 30 fps generates thousands of input frames automatically — no pre-planned image overlap grid required. This cuts field time to a fraction of a traditional photogrammetric mission and makes the approach highly practical for urgent deployments: crash reconstruction scenes that must be cleared in an hour, post-storm insurance assessments, or active construction sites where work cannot stop for a long drone mission. The tradeoff is that video frames carry more motion blur and compression artifacts than single-shot stills, which is why processing algorithms and output resolution tiers matter.
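To put the "thousands of input frames" figure in perspective, here is a small Python sketch of the arithmetic. The 10-minute flight and the keep-every-15th-frame sampling interval are illustrative assumptions, not a description of how any particular platform selects frames.

```python
def frames_available(flight_seconds: float, fps: float = 30.0) -> int:
    """Total video frames recorded during a flight at a given frame rate."""
    return int(flight_seconds * fps)


def frames_after_sampling(total_frames: int, keep_every_n: int) -> int:
    """Frames left when keeping frame 0, n, 2n, ... (ceiling division)."""
    if keep_every_n < 1:
        raise ValueError("keep_every_n must be at least 1")
    return -(-total_frames // keep_every_n)


total = frames_available(flight_seconds=10 * 60)           # 10-minute flight
sampled = frames_after_sampling(total, keep_every_n=15)    # two frames per second
print(total, sampled)
```

Even after aggressive subsampling, a short video flight leaves far more candidate frames than a typical triggered-photo mission, which is why no overlap grid has to be planned in advance.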

Photogrammetry and videogrammetry are both passive image-based methods, so the underlying photogrammetric theory, including how orthomosaic outputs are derived, is largely shared across the two workflows.

How accurate is reality capture data?

Reality capture accuracy depends on three factors: the sensing technology, the density and quality of ground control, and the processing resolution. Well-controlled aerial photogrammetry or videogrammetry can reach 0.1–0.25 inch (about 2.5–6.4 mm) horizontal and vertical accuracy at the scene level. LiDAR in ideal conditions can do better. ASPRS and NIST both publish standards and guidance that define how accuracy claims must be measured and reported to be defensible in professional or legal contexts.

Accuracy terminology in reality capture is frequently misused. The Guide to the Expression of Uncertainty in Measurement (GUM), published by the Joint Committee for Guides in Metrology and followed by NIST's own uncertainty guidelines, establishes that any accuracy figure must include a confidence level and a measurement method to be meaningful. A claim of "1 cm accuracy" that does not specify whether the figure is RMSE at checkpoints or 95th-percentile error, and whether it describes absolute or relative accuracy, is not a verifiable claim.
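The difference between an RMSE figure and a 95% figure can be sketched in a few lines of Python. The checkpoint residuals below are made-up values, the function names are hypothetical, and the 1.7308 multiplier is the NSSDA convention for converting radial RMSE to a 95% horizontal value, which assumes normally distributed errors with equal RMSE in x and y.

```python
import math


def rmse(errors):
    """Root-mean-square error of a list of residuals (model minus survey)."""
    if not errors:
        raise ValueError("at least one checkpoint residual is required")
    return math.sqrt(sum(e * e for e in errors) / len(errors))


def horizontal_rmse(dx, dy):
    """Radial (horizontal) RMSE combined from the x and y components."""
    return math.sqrt(rmse(dx) ** 2 + rmse(dy) ** 2)


def nssda_horizontal_95(rmse_r):
    """95% horizontal accuracy; assumes normal errors and RMSE_x == RMSE_y."""
    return 1.7308 * rmse_r


# Hypothetical residuals in metres at four independent checkpoints.
dx = [0.01, -0.01, 0.01, -0.01]
dy = [0.01, -0.01, 0.01, -0.01]

rmse_r = horizontal_rmse(dx, dy)
print(f"RMSE_r = {rmse_r:.4f} m, 95% = {nssda_horizontal_95(rmse_r):.4f} m")
```

The same set of checkpoints yields two noticeably different numbers, which is why a bare "1 cm" claim cannot be checked without knowing which statistic it reports.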

For drone-based reality capture specifically, the ASPRS Positional Accuracy Standards provide the industry benchmark. A Class 1 product requires that horizontal RMSE at independent checkpoints not exceed the nominal ground sample distance (GSD) of the imagery. In practice, a 1 cm/pixel GSD photogrammetric survey targeting Class 1 must achieve horizontal RMSE at or below 1 cm at checkpoints — achievable with well-placed GCPs and RTK/PPK georeferencing on the drone.
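To make the 1 cm/pixel example concrete, the Python sketch below computes nominal GSD from camera geometry using the standard pinhole relationship, then applies the RMSE-versus-GSD rule exactly as the paragraph above states it. The camera values and function names are illustrative assumptions; consult the ASPRS standard itself for the authoritative pass criteria.

```python
def ground_sample_distance_m(sensor_width_mm, focal_length_mm,
                             image_width_px, altitude_m):
    """Nominal GSD in metres per pixel for a nadir image (pinhole model)."""
    return (sensor_width_mm * altitude_m) / (focal_length_mm * image_width_px)


def meets_gsd_threshold(horizontal_rmse_m, gsd_m):
    """True when checkpoint RMSE does not exceed the nominal GSD."""
    return horizontal_rmse_m <= gsd_m


# Assumed camera: 13.2 mm sensor width, 8.8 mm lens, 5472 px wide images.
gsd = ground_sample_distance_m(13.2, 8.8, 5472, altitude_m=36.48)
print(f"GSD = {gsd * 100:.2f} cm/pixel")

print(meets_gsd_threshold(horizontal_rmse_m=0.008, gsd_m=gsd))  # within GSD
print(meets_gsd_threshold(horizontal_rmse_m=0.012, gsd_m=gsd))  # exceeds GSD
```

Since GSD scales linearly with altitude, flying lower tightens the threshold the survey must then meet at its checkpoints.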

SkyeBrowse's tiered processing model maps directly to these accuracy levels. The Lite processing tier targets accuracy around 2–6 inches — sufficient for insurance claims and situational awareness. Premium processing at 8K resolution delivers accuracy around 0.25 inch, appropriate for engineering measurements and legal evidence. Premium Advanced processing at 16K with AI moving-object removal reaches approximately 0.1 inch, suitable for court-admissible accident reconstruction deliverables.
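One way to reason about tier choice is as a simple lookup against the tolerance a job requires. The Python sketch below is only an illustration built from the approximate figures quoted above; the table, the function name, and the use of 6 inches as Lite's worst case are assumptions, and none of this is a SkyeBrowse API.

```python
MM_PER_INCH = 25.4

# (tier name, approximate accuracy in inches), ordered coarsest first.
# Lite is listed at the upper end of its quoted 2-6 inch range.
TIERS = [
    ("Lite", 6.0),
    ("Premium", 0.25),
    ("Premium Advanced", 0.1),
]


def coarsest_tier_meeting(required_inches):
    """Return the first (coarsest) tier whose accuracy meets the tolerance."""
    for name, accuracy_inches in TIERS:
        if accuracy_inches <= required_inches:
            return name
    return None  # no listed tier is tight enough


for tolerance in (6.0, 1.0, 0.2, 0.05):
    tier = coarsest_tier_meeting(tolerance)
    print(f"{tolerance} in ({tolerance * MM_PER_INCH:.1f} mm): {tier}")
```

Picking the coarsest tier that satisfies the tolerance keeps processing proportional to what the deliverable actually needs.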

The companion technology that underpins measurement reliability is sensor fusion: pairing visual data with GPS telemetry. SkyeBrowse accepts .SRT (DJI) and .ASS (Autel) telemetry files alongside the video upload to improve georeferencing without requiring manual GCP placement — a workflow that drone LiDAR systems handle differently through direct-georeferencing hardware onboard the sensor.

What is reality capture used for in construction?

In construction, reality capture is used to document existing conditions before work starts (existing-conditions surveys), verify that completed work matches design intent (as-built documentation), and monitor work-in-progress against schedule (construction progress monitoring). 3D reality capture in construction reduces rework costs by catching dimensional deviations before they are buried under the next phase of work.

The construction industry is the single largest commercial adopter of reality capture. The driving use cases are:

Existing conditions surveys: Before demolition, retrofit, or new construction adjacent to existing structures, a reality capture survey creates a georeferenced baseline. Clash detection against a BIM model can then identify conflicts before they become expensive field problems.

As-built documentation: Post-construction reality capture of the finished structure creates a permanent spatial record. For infrastructure projects, this record is often contractually required and may be referenced decades later for maintenance, expansion, or insurance purposes. Drone-based methods are particularly efficient here because they cover large horizontal extents (a warehouse floor, a road surface, a utility corridor) in a single flight.

Progress monitoring: Weekly or monthly reality capture flights of an active construction site let project managers compare current conditions against the BIM model or schedule. Earthwork volumes can be computed by differencing successive point clouds or the elevation surfaces derived from them, providing data-driven progress reports without manual surveying.
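The earthwork calculation mentioned for progress monitoring can be sketched as follows. This Python example assumes both surveys have already been resampled onto the same regular elevation grid; the grid values, cell size, and function name are hypothetical, and production tools handle alignment, gaps, and noise that this sketch ignores.

```python
def cut_fill_volumes_m3(before, after, cell_size_m):
    """Return (cut, fill) volumes in cubic metres between two elevation grids.

    Each grid is a list of rows of elevations in metres on identical cells.
    """
    if len(before) != len(after):
        raise ValueError("grids must have the same number of rows")
    cell_area_m2 = cell_size_m * cell_size_m
    cut_m3 = 0.0
    fill_m3 = 0.0
    for row_before, row_after in zip(before, after):
        if len(row_before) != len(row_after):
            raise ValueError("grid rows must have the same length")
        for z_before, z_after in zip(row_before, row_after):
            dz = z_after - z_before
            if dz > 0:
                fill_m3 += dz * cell_area_m2    # material added
            elif dz < 0:
                cut_m3 += -dz * cell_area_m2    # material removed
    return cut_m3, fill_m3


# Two hypothetical 2 x 2 surveys on 2 m cells, one week apart.
week_1 = [[10.0, 10.0], [10.0, 10.0]]
week_2 = [[10.5, 10.0], [9.0, 10.0]]

cut, fill = cut_fill_volumes_m3(week_1, week_2, cell_size_m=2.0)
print(f"cut = {cut} m3, fill = {fill} m3")
```

Reporting cut and fill separately, rather than a single net figure, keeps excavation and backfill from cancelling each other out in the progress report.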

Reality capture construction workflows increasingly use drone videogrammetry for progress monitoring because the field time is minimal — a 15-minute flight over a mid-size jobsite generates the data needed for a complete 3D model — while LiDAR remains the choice for millimeter-precision as-built surveys of mechanical, electrical, and plumbing systems indoors. For the full breakdown of drone-based construction documentation, see our construction drone services guide.

The connection to digital twin technology is direct: a regularly updated reality capture dataset is the data source that keeps a construction digital twin current. Without repeat reality capture, a digital twin is static and becomes inaccurate as the physical site evolves.

What is the fastest way to capture a scene in the field?

Videogrammetry is the fastest reality capture method for outdoor and aerial scenes. A drone video flight over a site can generate a complete, measurement-ready 3D model in minutes after upload, with no pre-planned image grid, no GCP placement during the flight, and no desktop processing hardware required. For teams that need spatial data fast — first responders, insurance adjusters, construction superintendents — videogrammetry closes the gap between field capture and actionable data to under an hour in many workflows.

Speed matters differently depending on the use case. For accident reconstruction, a scene may need to be cleared for traffic within 90 minutes of arrival — leaving almost no time for a traditional photogrammetric survey with 70%+ overlap images and GCP placement. For storm damage insurance assessment, an adjuster covering 20 properties in a day cannot afford a 45-minute drone mission per roof. For a construction superintendent, the value of progress data is inversely proportional to how long it takes to get it.

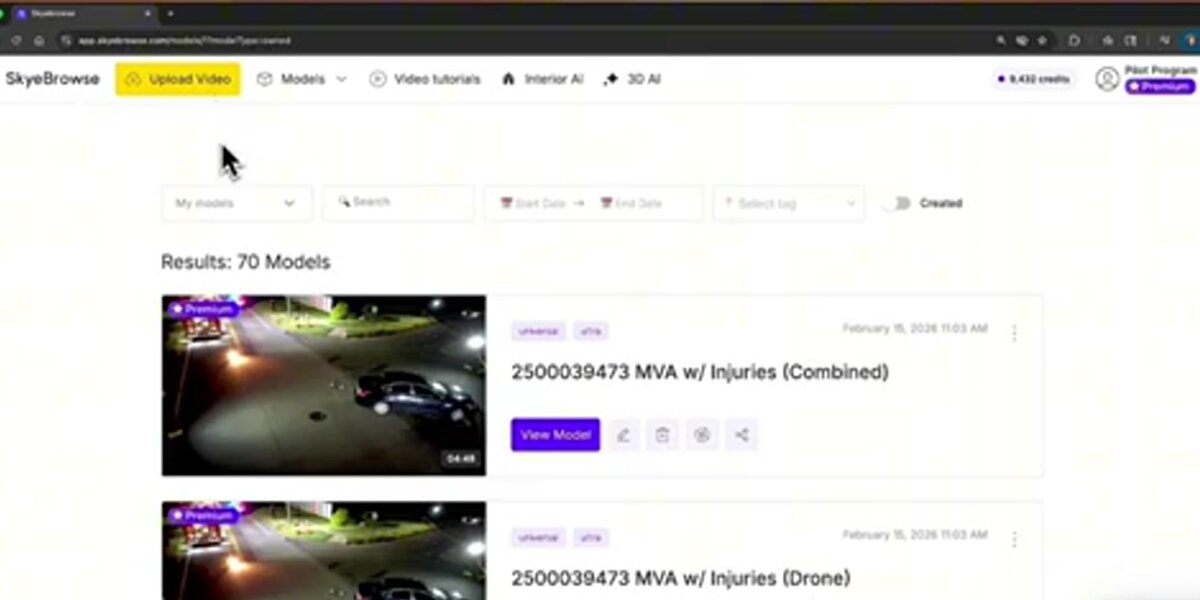

SkyeBrowse was built specifically to eliminate the processing bottleneck. Video files are uploaded to the cloud processing platform at app.skyebrowse.com using the Universal Upload workflow or the SkyeBrowse Flight App. The platform accepts .MP4 and .MOV files from any supported drone and pairs them with GPS telemetry automatically. Processing happens in the cloud — no workstation, no GPU, no wait for a specialist to run the job. The resulting deliverables include a 3D mesh (.GLB), point cloud (.LAZ), and georeferenced orthomosaic (.GeoTIFF), all exportable for use in GIS, CAD, or legal documentation workflows.

More than 1,200 agencies and organizations globally — spanning law enforcement, fire departments, insurance carriers, and construction firms — have adopted SkyeBrowse's cloud videogrammetry because it removes the expertise barrier that traditionally kept fast-turnaround 3D capture out of reach for field personnel. A patrol officer with a department DJI drone can produce a court-ready 3D crash scene model. An insurance adjuster can deliver a georeferenced roof model before leaving the property. A site superintendent can have an updated progress model on their laptop before the morning standup.

For teams considering the comparison between SkyeBrowse's videogrammetry approach and RealityCapture by Epic Games (the photogrammetry software product), see our dedicated SkyeBrowse vs. RealityCapture comparison for a feature-by-feature breakdown.

FAQ

What is reality capture?

Reality capture is the process of collecting spatial data from a physical environment and converting it into a precise digital representation — a 3D model, point cloud, orthomosaic map, or digital twin. It covers multiple technologies including LiDAR, photogrammetry, structured light scanning, and videogrammetry.

What is the difference between reality capture and photogrammetry?

Photogrammetry is one method within the broader reality capture category. It reconstructs 3D geometry by analyzing overlapping photographs. Reality capture is the umbrella term that also includes LiDAR, structured light scanning, and videogrammetry — each producing a spatial dataset that represents a physical environment.

Is RealityCapture software the same as reality capture technology?

No. RealityCapture (capital R, capital C) is a proprietary photogrammetry software product developed by Capturing Reality and now owned by Epic Games. Reality capture (lowercase) is the broader technology category encompassing any method — LiDAR, photogrammetry, videogrammetry, structured light — used to digitize the physical world into spatial data.

What industries use reality capture?

Reality capture is used across construction and engineering (as-built documentation, progress monitoring), public safety (accident reconstruction, crime scene mapping), insurance (property damage claims), real estate (virtual tours), and infrastructure inspection (bridges, utilities, rooftops).

How long does reality capture take?

Field capture time varies by method and scene size. A drone videogrammetry flight over a standard crash scene or single-building rooftop takes 5–15 minutes of flight time. Processing time depends on the platform: cloud-based videogrammetry platforms like SkyeBrowse typically return a complete 3D model within minutes of upload, while desktop photogrammetry software processing large image sets can take hours. LiDAR data collection is fast but post-processing and point cloud cleaning add significant time for complex scenes.